How Young Consumers Really Feel About AI: Use, Trust, and Uncertainty

Published: May 2026

Artificial intelligence has moved from a niche innovation to a mainstream part of everyday life for young Europeans aged 15-30. The Youth Pulse Wave 5 findings indicate that this generation already uses AI tools actively but remains cautious about their risks, especially regarding trust, identity, and long-term societal impact.

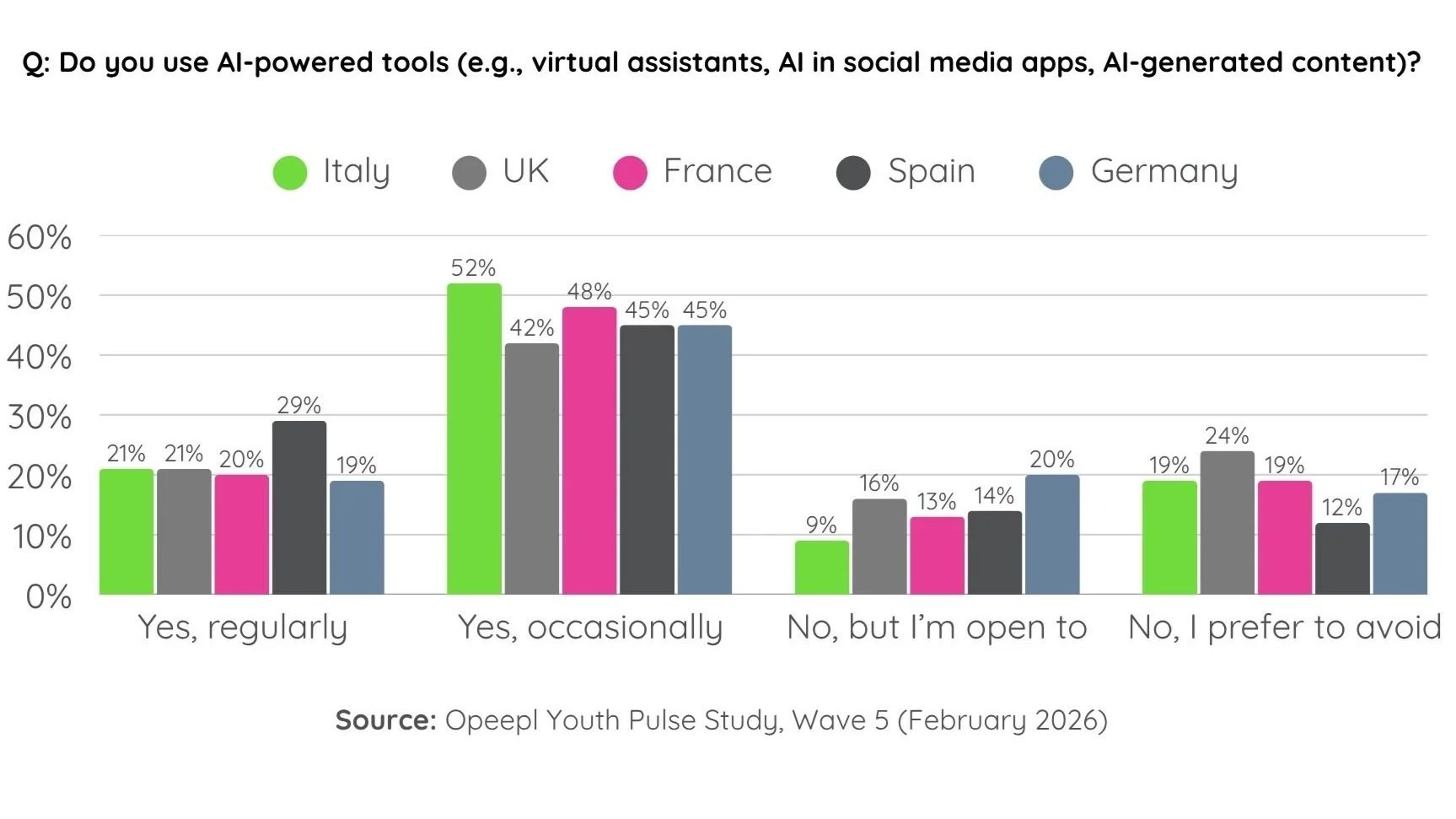

AI tool usage: already mainstream, but unevenly adopted

AI usage among European youth is now widespread: 67% already use AI-powered tools, meaning roughly two-thirds of young consumers interact with AI in some form today. AI is therefore no longer a rising trend but a regular part of young people's digital behaviour.

A further 14% have not yet adopted AI but are open to using it in the future, a sizeable group that is not yet engaged but not resistant either. By contrast, 18% prefer to avoid AI altogether, a notable minority that is skeptical, uninterested, or cautious about bringing it into their lives.

Usage intensity also differs by gender:

Regular users: 24% of males vs 19% of females

Occasional users: 45% of males vs 47% of females

Avoiding AI: 16% of males vs 19% of females

Geography also influences behaviour. Spain stands out as a high-adoption market: 29% of young Spaniards report regular AI use, well above the 20% average across the other EU5 countries, which points to stronger acceptance of AI tools in daily digital routines. Germany, by contrast, shows more openness than active use. Fewer young Germans are currently regular users, but 20% report not yet using AI while being open to it, compared with only 13% across the other EU5 markets. This suggests potential for future growth rather than immediate adoption.

Trust in AI for important life decisions

When it comes to significant decisions such as career advice or health recommendations, trust in AI drops sharply compared with general usage. Only 15% of youth would trust AI to make important life decisions, so fully delegating crucial choices to AI remains rare.

A larger group, 33%, shows limited trust: they might rely on AI but would want human oversight or verification. This group has grown by 3 percentage points since Wave 4, suggesting a gradual shift toward hybrid decision-making in which AI supports rather than replaces human judgment.

Meanwhile, 42% of respondents explicitly prefer human judgment, making this the dominant position. This group has also grown slightly since Wave 4 (+2 percentage points), reinforcing the idea that human authority remains central to decision-making.

Uncertainty about using AI for decision-making is also declining. Only 10% are unsure whether they would trust AI with important life decisions, down 5 percentage points from Youth Pulse Wave 4. Young people are forming clearer opinions about AI's role in decision-making, even if those opinions lean toward caution or skepticism.

Overall, the data shows a clear hierarchy of trust among youth: AI is acceptable as an advisor, conditionally acceptable as a decision aid under human oversight, and still largely rejected as an autonomous decision-maker for important life choices.

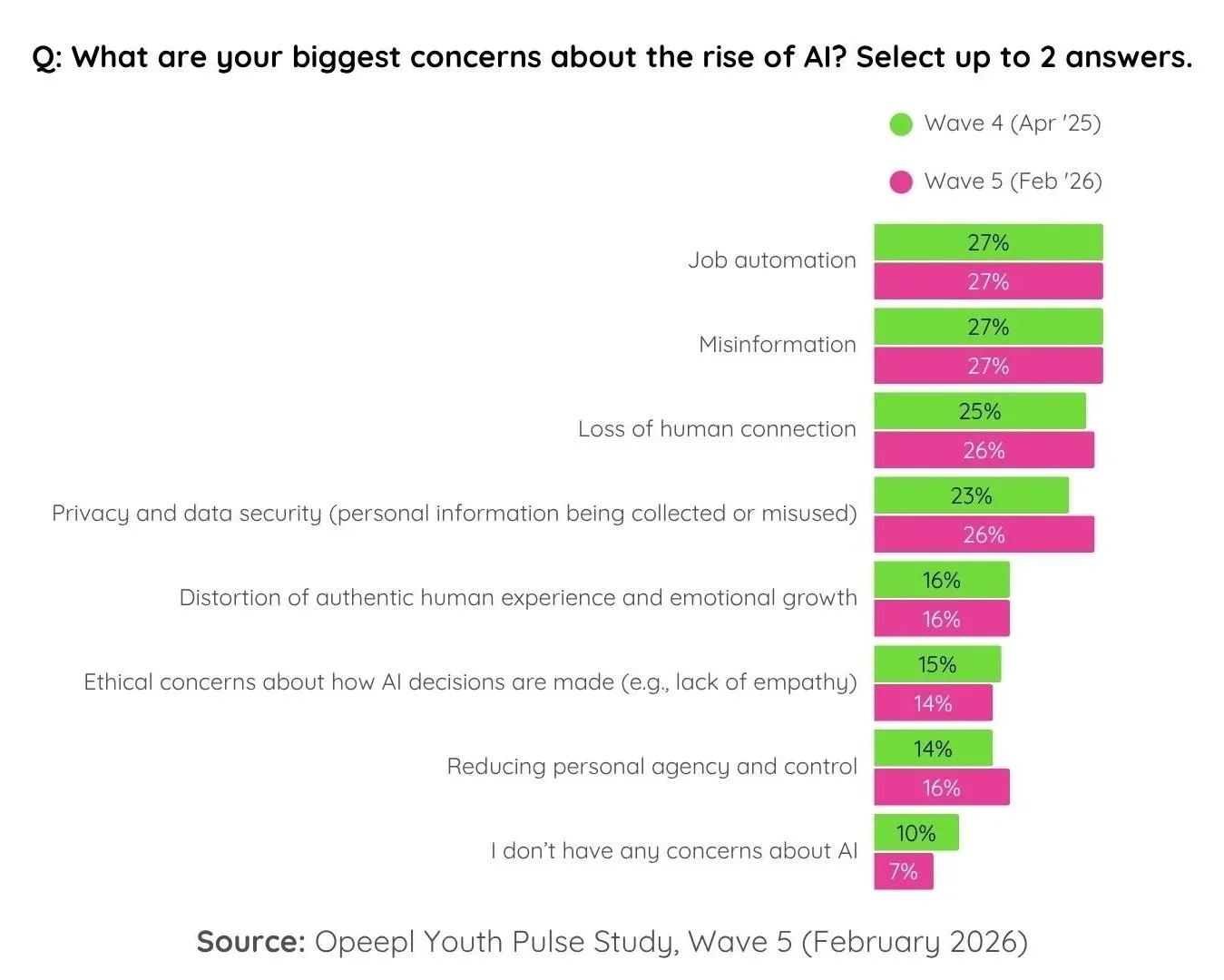

Top concerns about AI: employment risks and misinformation

Concerns about AI are spread across multiple areas, with no single dominant fear. Youth instead express a complex set of anxieties that reflect both practical and existential considerations.

The most prominent concerns are tied at 27% each: job automation (AI replacing human work) and misinformation (fake or biased content). Together they point to economic insecurity and to doubts about the reliability of information in an AI-driven world.

Close behind, 26% of youth worry about a loss of human connection, fearing that increasing automation and digital mediation may weaken interpersonal relationships. Another 26% are concerned about privacy and data security, up 3 percentage points from Wave 4, which indicates growing sensitivity around personal data use and surveillance.

Beyond these top concerns, 16% worry about distortion of authentic human experience and emotional growth, while another 16% are concerned about reduced personal agency and control. These concerns point toward deeper psychological and autonomy-related anxieties rather than purely practical risks.

Additionally, 14% have ethical worries about how AI makes decisions, particularly its lack of empathy and transparency. Finally, only 7% report no concerns at all, down 3 percentage points since Wave 4, which is consistent with growing AI skepticism among youth over time.

Overall, the concern landscape shows that youth do not view AI risk through a single lens. Instead, they evaluate it through economic, social, informational, ethical, and psychological dimensions.

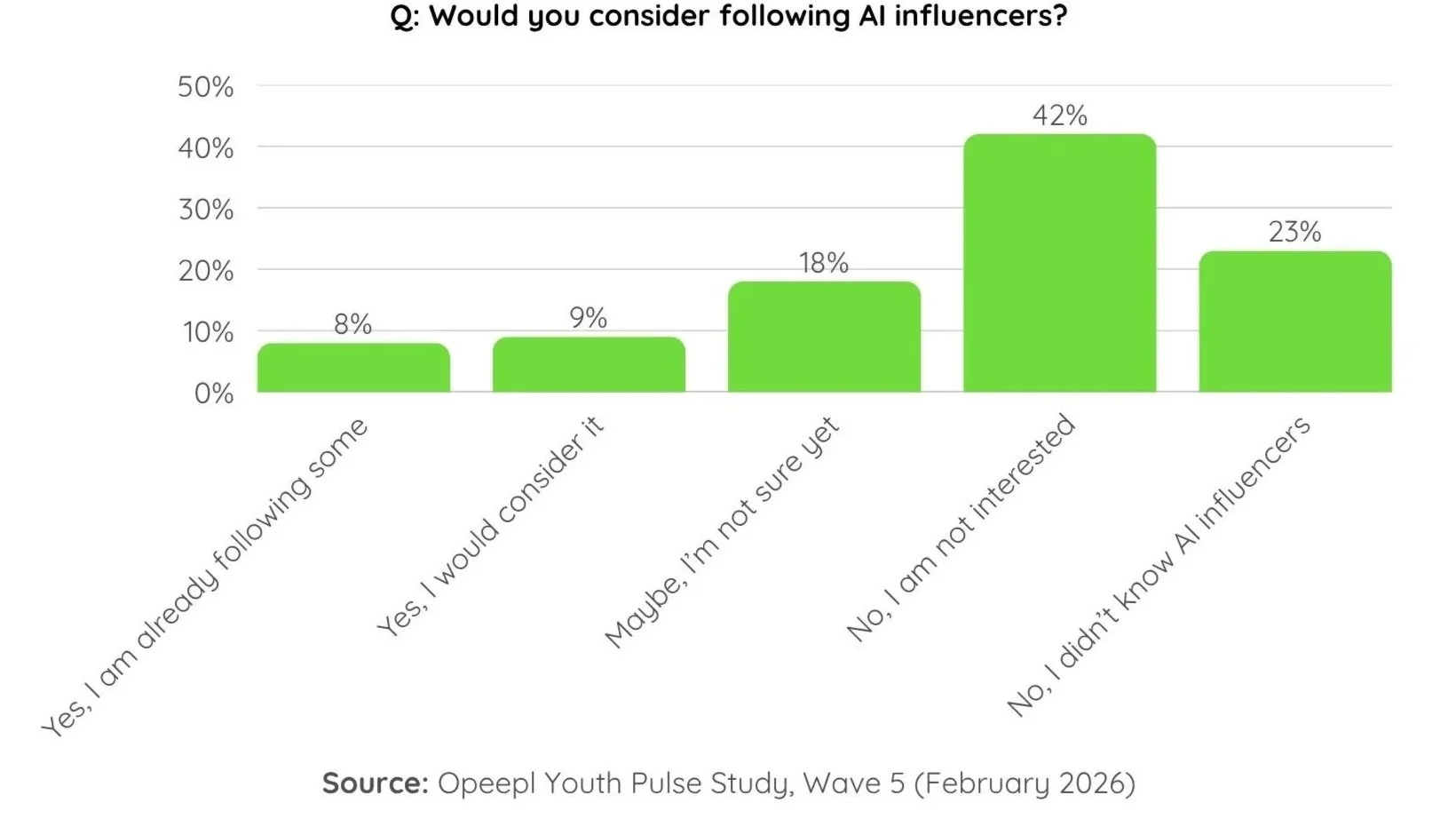

AI influencers: low adoption and limited trust

AI influencers, virtual personalities generated with artificial intelligence, remain a relatively unfamiliar and little-followed phenomenon among European youth:

8% currently follow AI influencers, indicating very limited mainstream adoption

9% would consider following them

18% are unsure

42% have no interest

23% were not aware that AI influencers existed before the survey.

This lack of awareness shows that AI influencer culture has not yet reached mainstream youth attention, even among a digitally active generation.

Trust in AI influencers is similarly limited. Only 31% express some level of trust, and most of it is conditional: just 5% fully trust AI influencers, while 26% would trust them only if the content is verified or fact-checked. Skepticism prevails on the other side: 28% do not really trust AI influencers, and another 28% do not trust them at all.

This creates a clear credibility gap: even where trust exists, it is fragile and relies heavily on external validation.

Finally, attitudes toward brands' use of AI reflect broader skepticism. Only 6% feel very comfortable with brands using AI, while a substantial 36% are not comfortable at all. This reinforces the idea that AI in commercial or persuasive contexts triggers stronger resistance than AI used as a tool.

Conclusion: a high-adoption, low-trust equilibrium

Across all dimensions, the Youth Pulse Wave 5 data shows a consistent pattern in how European youth relate to AI. Usage is already widespread and embedded in daily behaviour, but trust remains limited and conditional, especially in socially influential contexts.

Youth are not rejecting AI. Instead, they are integrating it selectively, accepting its utility while maintaining clear boundaries around autonomy, decision-making, and authenticity. Concerns are broad and multifaceted, covering jobs, misinformation, privacy, and human connection, while emerging AI-native phenomena like AI influencers are still struggling to gain both awareness and credibility.

The result is a stable but cautious balance: AI is widely used, moderately accepted, and carefully constrained by human oversight and trust boundaries.


Discover more trends among 15-30-year-olds in the Youth Pulse Report

Opeepl Youth Pulse is a bi-annual study that tracks the latest developments in the youth market. Discover key youth trends in consumer confidence, media habits, attitudes, and values, as well as four major categories: Food, Beverages, Fashion, and Personal Care.